We Audited 36 Dubai SaaS Companies for AI Visibility. Most of Them Are Invisible.

When buyers ask ChatGPT for software recommendations, most Dubai SaaS companies are not in the answer. We audited 36 of them to find out why.

The way buyers discover software is changing faster than most marketing teams realise. A year ago, the question was whether your company ranked on the first page of Google. Today, a growing share of buyers are opening ChatGPT, Perplexity, or Gemini, asking questions like "what's the best payment gateway for my e-commerce store in the UAE?", and expecting a direct answer with named recommendations.

If your company isn't in that answer, you don't exist to that buyer.

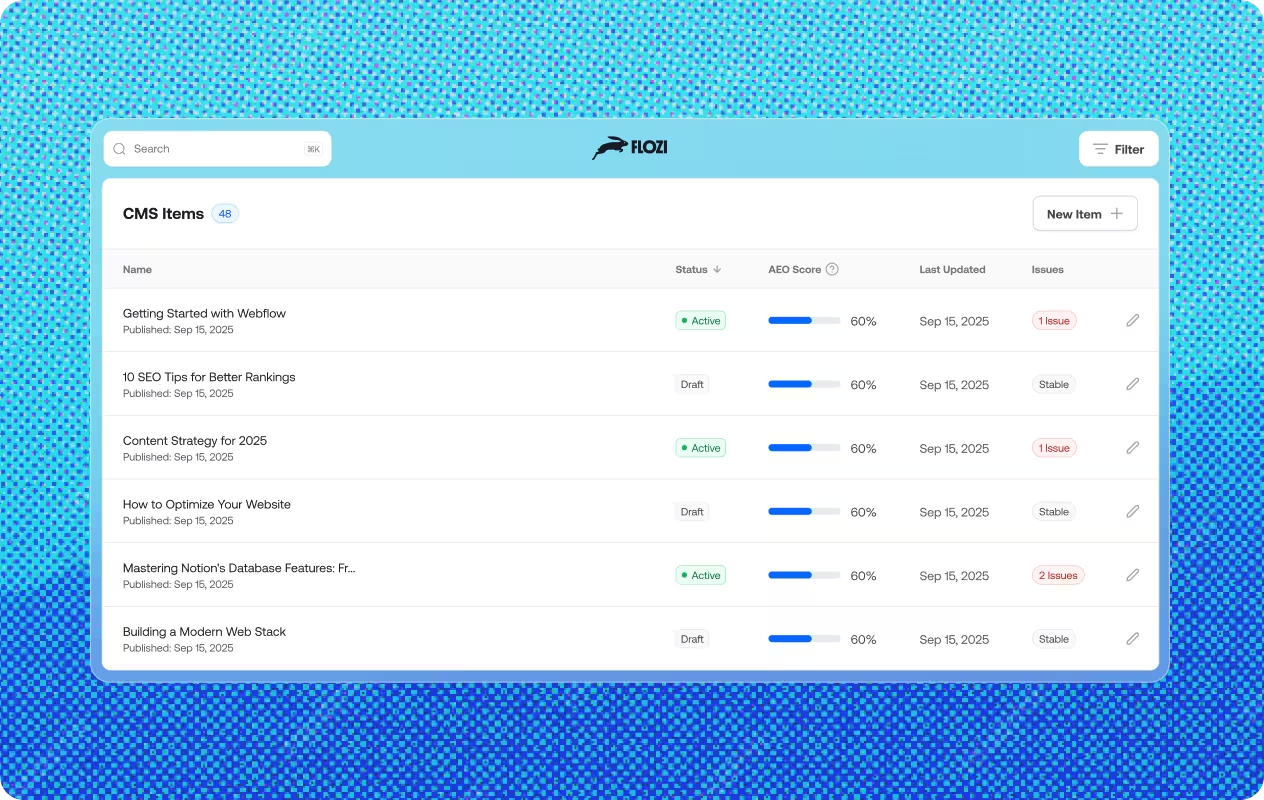

To understand how prepared Dubai's SaaS ecosystem is for this shift, Flozi audited 36 of the region's most active software companies across Fintech, PropTech, EdTech, Logistics, HealthTech, and more. Each company was scored across five pillars that determine whether an AI engine can find, understand, and confidently cite their content.

The results are striking.

The average AI readiness score across all 36 companies is 37.5 out of 100. Only six companies qualified as "Good." Eleven were rated Critical, meaning AI engines struggle significantly to cite them or cannot process their content at all. The remaining fourteen scored companies fall into a "Needs Work" middle ground that sounds acceptable but represents a real missed opportunity every time a buyer asks AI for a recommendation.

This isn't a future problem. It is happening right now.

How We Measured AI Readiness

Before drawing conclusions from the data, it's worth explaining what we actually measured. AI readiness isn't a single metric. It's the product of five distinct capabilities, each of which contributes to whether an AI engine can find a company, understand what it does, and confidently recommend it.

The five pillars Flozi evaluates are:

AI Access: Can AI crawlers enter the site at all? Some companies have technical configurations like robots.txt rules, JavaScript-heavy rendering, or aggressive bot blocking that prevent AI engines from reading their pages entirely. This is step zero: if crawlers can't get in, nothing else matters.

Chunk Extractability: Is the content written in digestible, focused units? AI engines don't read pages the way humans do. They extract passages to answer specific queries. Long, flowing marketing paragraphs that blend several ideas together are essentially invisible, because the engine can't lift a clean, relevant excerpt from them. Content needs to be broken into focused paragraphs where each one covers exactly one idea completely.

Answer Readiness: Can the content directly answer a question a user might ask? This measures whether the text is written in a way that resolves a query, not just describes a product. There's a meaningful difference between "We offer industry-leading payment solutions" and "Telr processes payments in 168 currencies and integrates with Shopify, WooCommerce, and Magento in under 30 minutes."

Query Alignment: Does the language on your site match the way real users phrase their queries to AI? If buyers ask "what's the best tool for managing freight procurement in the UAE" and your site talks about "end-to-end logistics optimisation," there's a gap. Query alignment is about speaking the buyer's language, not the brand's.

Schema Markup: Have you given AI engines structured proof of who you are and what you do? Schema markup is machine-readable data embedded in your site's code. Think of it as a verified identity card for search engines. Without it, a search engine has to guess your company's category, offerings, and credibility from raw text alone. With it, that information is served directly to the search engines that AI systems draw on.

Each pillar is scored independently and combined into an overall AI readiness score. The data across all five reveals a clear picture of where Dubai's SaaS ecosystem is winning and where it is losing ground.

The Industry Leaderboard

Not every sector is equally prepared. When we average scores by industry, a clear hierarchy emerges, and it contains at least one significant irony.

Marketplace and Logistics AI companies lead the pack. A freight logistics platform scored the highest in the entire study at 65, and a home services marketplace platform came in at 60. The common thread: both companies have content that naturally aligns with the way buyers ask for services. When someone asks AI "what's the best tool for managing freight procurement," a logistics platform that talks about freight procurement directly is far more likely to be cited than one that talks abstractly about "supply chain excellence."
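The gap between buyer language and brand language can be approximated with a crude word-overlap check. The sketch below is only an illustration, not the scoring method used in the audit: it assumes a small hand-picked stopword list and measures what share of a buyer query's content words also appear in a piece of page copy. The function names and both sample copy lines are hypothetical, and real AI engines match on meaning rather than exact words.

```python
import re

# Minimal stopword list (an assumption for this sketch, not a standard list).
STOPWORDS = {"what", "s", "the", "best", "for", "in", "a", "an", "of", "to", "and", "is"}


def content_terms(text: str) -> set[str]:
    """Lowercase the text and return its words minus the stopwords."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {word for word in words if word not in STOPWORDS}


def query_coverage(query: str, page_copy: str) -> float:
    """Share of the query's content words that also appear in page_copy."""
    query_terms = content_terms(query)
    if not query_terms:
        return 0.0
    return len(query_terms & content_terms(page_copy)) / len(query_terms)


BUYER_QUERY = "what's the best tool for managing freight procurement in the UAE"

# Two hypothetical homepage lines: one in brand language, one in buyer language.
BRAND_COPY = "End-to-end logistics optimisation for supply chain excellence"
BUYER_COPY = "Freight procurement software for managing carrier quotes in the UAE"

print(query_coverage(BUYER_QUERY, BRAND_COPY))
print(query_coverage(BUYER_QUERY, BUYER_COPY))
```

The brand-language line shares no content words with the query, while the buyer-language line covers most of them. A check like this is only a first pass for spotting pages that never use the words buyers use.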

Fintech sits in the middle of the table at an average of 42.7, respectable relative to the dataset, but with significant variance. The sector contains both some of the study's strongest performers and some of its most alarming failures.

At the bottom sit EdTech (26.7), AI Platforms (25.0), and Home Services (25.0). The EdTech result reflects a common pattern: companies in this sector tend to write for brand awareness rather than query resolution, resulting in content that is eloquent but not extractable.

The AI Platform result deserves its own section.

The AI Platform Irony

Companies that build AI tools for other businesses are, as a group, among the least AI-visible companies in the study. Both AI Platform companies in the dataset scored 25 or below, lower than Fintech, Logistics, PropTech, and most other sectors.

This isn't coincidental. AI-first companies tend to obsess over their product and underinvest in the web infrastructure that allows other AI systems to find and recommend them. They understand AI deeply as a technology. They have not applied that understanding to their own digital presence.

The message for any AI platform marketing team is pointed: if you can't be found by AI, your credibility as an AI company is undermined before the conversation starts.

The Three Barriers Holding Everyone Back

Across 31 scorable companies, three patterns of failure appear repeatedly. Understanding them is the first step to fixing them.


Barrier One: The Schema Gap

The single most common weakness in the study is Schema Markup, cited as the primary failure point for 52% of companies audited. Schema markup, for those unfamiliar, is structured data embedded in a website's code that tells search engines exactly what a business is, what it offers, who it serves, and how to verify its identity. It's not content; it's metadata that speaks directly to search engines.

Despite being tech companies operating in a technology-forward market, 87% of the companies in this study have zero meaningful schema implementation. Only four companies returned any schema score at all, and of those, only one, a real estate crowdfunding platform, had implemented it in a way that made a measurable difference.

The irony is acute. These companies invest heavily in product development, user experience, and brand campaigns. Most have skipped the one technical signal that most directly tells AI engines "here is who we are, here is what we do, and here is why you should trust us."

Schema markup is also the fastest fix available. Adding basic Organization, WebSite, and Product schema to a homepage can be done in an afternoon by a developer. The return is large relative to the effort.
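As a rough illustration of how small this fix is, the sketch below builds a JSON-LD block containing the schema.org `Organization` and `WebSite` types (schema.org uses the American spelling for the type name). The company name, URL, and description are placeholders, and the helper names are hypothetical. A real implementation would add whichever properties apply to the business and validate the output with a structured data testing tool.

```python
import json


def build_homepage_schema(name: str, url: str, description: str) -> str:
    """Return a JSON-LD string describing an Organization and its WebSite."""
    organization_id = f"{url}#organization"
    graph = {
        "@context": "https://schema.org",
        "@graph": [
            {
                "@type": "Organization",
                "@id": organization_id,
                "name": name,
                "url": url,
                "description": description,
            },
            {
                "@type": "WebSite",
                "@id": f"{url}#website",
                "name": name,
                "url": url,
                # Link the site back to the organization that publishes it.
                "publisher": {"@id": organization_id},
            },
        ],
    }
    return json.dumps(graph, indent=2)


def as_script_tag(json_ld: str) -> str:
    """Wrap JSON-LD in the script tag that goes in the page's HTML."""
    return f'<script type="application/ld+json">\n{json_ld}\n</script>'


# Placeholder values for a made-up company.
schema = build_homepage_schema(
    name="Acme Freight",
    url="https://example.com/",
    description="Acme Freight is a freight procurement platform for UAE shippers.",
)
print(as_script_tag(schema))
```

The printed tag is what a developer would paste into the homepage template.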

Barrier Two: The Formatting Wall

The second most common weakness is Chunk Extractability, the ability of AI engines to lift clean, focused passages from a page and use them to answer user queries.

Eight companies in the study were flagged for this as their primary weakness. Their top gap, recorded verbatim in the audit data: "Your content is hard for AI to extract, try breaking it into shorter, focused paragraphs that each cover one idea completely."

This is a content strategy problem, not a technical one. Many of the companies flagged here have invested in good writing. The content is thoughtful, polished, and well-branded. It is also written for a human reader who will scroll, scan, and absorb the overall message, not for an AI that needs to extract a precise, self-contained answer to a specific question.

The fix is a shift in how content is structured at the paragraph level. Every paragraph should be able to stand alone as a complete, useful unit of information. If a paragraph covers three ideas, it should be three paragraphs. If the opening sentence of a paragraph doesn't tell the reader what that paragraph is about, it needs to be rewritten.

This isn't a compromise on quality. Chunked, extractable content is also cleaner, clearer, and more readable for humans.
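A first pass at this kind of paragraph review can be automated. The sketch below is a simple heuristic, not the audit's scoring logic: it splits page copy on blank lines, counts sentences with a naive punctuation rule, and flags any paragraph longer than a chosen limit. The function name, the default limit of four sentences, and the sample copy are assumptions for illustration. A sentence count cannot tell whether a paragraph covers one idea, so flagged paragraphs still need a human read.

```python
import re


def audit_paragraphs(text: str, max_sentences: int = 4) -> list[dict]:
    """Count sentences per paragraph and flag paragraphs above the limit."""
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    report = []
    for index, paragraph in enumerate(paragraphs, start=1):
        # Naive split: a sentence ends at ., ! or ? followed by whitespace.
        sentences = [s for s in re.split(r"(?<=[.!?])\s+", paragraph) if s]
        report.append(
            {
                "paragraph": index,
                "sentences": len(sentences),
                "too_long": len(sentences) > max_sentences,
            }
        )
    return report


# Hypothetical page copy: one focused paragraph, one that blends many ideas.
SAMPLE_COPY = (
    "Acme Freight is a freight procurement platform. "
    "It helps UAE shippers compare carrier quotes.\n\n"
    "We believe in excellence. We value our customers. Our story began in 2015. "
    "Our team is global. Our platform does many things."
)

for row in audit_paragraphs(SAMPLE_COPY):
    print(row)
```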

Barrier Three: The Identity Problem

The third barrier is the most human of the three, and in some ways the most avoidable.

Ten companies, nearly one in three, were flagged by the audit for what we call the vague value proposition: their homepage does not clearly state what the company does in plain, simple language near the top of the page.

Several of the most well-known Fintech companies in Dubai were flagged for exactly this issue. Their audits returned feedback along the lines of: "Your homepage doesn't clearly explain what this company does near the top. Add a simple sentence like '[Company] is a [what you do]' or '[Company] helps [audience] do X.'"

This matters because AI engines establish context from the first content they encounter on a page. If the opening of a homepage is a brand tagline, a campaign slogan, or an abstract aspirational statement rather than a clear functional description, the AI may never correctly categorise what the company actually does. A human reading "Powering the future of financial inclusion" might infer payment processing. An AI reading that phrase has no clear anchor.

The fix is simple: the first meaningful sentence on your homepage should answer the question "what is this company, and who does it help?" One sentence. Plain language. At the top.
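The two sentence templates quoted in the audit feedback can be turned into a quick self-check. The sketch below is a deliberately narrow heuristic with a hypothetical function name: it only tests whether a hero sentence opens with "[Company] is a/an/the ..." or "[Company] helps ...". A sentence can fail this check and still be a clear functional description, so treat a failure as a prompt to re-read the copy, not as a verdict.

```python
import re


def has_functional_definition(first_sentence: str, company: str) -> bool:
    """True if the sentence opens with '<company> is a/an/the' or '<company> helps'."""
    pattern = rf"^{re.escape(company)}\s+(is\s+(a|an|the)\b|helps\b)"
    return re.search(pattern, first_sentence.strip(), flags=re.IGNORECASE) is not None


# "Acme Pay" is a made-up company used only for illustration.
print(has_functional_definition(
    "Acme Pay is a payment gateway for UAE e-commerce stores.", "Acme Pay"))
print(has_functional_definition(
    "Powering the future of financial inclusion", "Acme Pay"))
```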

The Ghost Companies

Five companies in the dataset, representing sectors including Fintech, HealthTech, AI Platform, and CX, returned no score at all. Not a low score. No score.

These companies were either technically configured in a way that prevented AI crawlers from accessing their content, or their site structure was sufficiently opaque that the audit tool could not retrieve enough usable data to generate a result.

This is the most severe category of AI invisibility. A company with a low schema score can be found and partially understood. A company that returns no score is, from the perspective of an AI engine, effectively absent from the web.

If your site has never been tested for AI accessibility, a necessary first step before any other optimisation is to confirm that your robots.txt file doesn't inadvertently block AI crawlers and that your core pages render their content in plain HTML, not exclusively via client-side JavaScript.
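The robots.txt half of that check can be sketched with Python's standard library. The example below parses a robots.txt file supplied as text and reports which crawler tokens are not allowed to fetch a given URL. The list of user-agent tokens is an assumption based on names the vendors have published, and it will go out of date, so confirm the current tokens in each vendor's documentation. The sample robots.txt is hypothetical. This covers robots.txt rules only; it says nothing about JavaScript rendering or firewall-level bot blocking.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens associated with AI crawlers (an assumption for this
# sketch; check each vendor's documentation for the current list).
AI_CRAWLERS = ["GPTBot", "PerplexityBot", "ClaudeBot", "Google-Extended"]


def blocked_ai_crawlers(robots_txt: str, url: str) -> list[str]:
    """Return the crawler tokens that robots_txt forbids from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_CRAWLERS if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt that blocks one AI crawler and allows everyone else.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

print(blocked_ai_crawlers(SAMPLE_ROBOTS, "https://example.com/pricing"))
```

To run it against a live site, download the file from the site's `/robots.txt` path and pass its text to `blocked_ai_crawlers`.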

What Good Actually Looks Like

Six companies in the study qualified as "Good." Looking at what they share reveals a clear playbook.

The jump between Critical and Good companies is not incremental. It represents a fundamentally different approach to content. The average chunk score for Critical companies is 5.4. For Good companies, it is 19.0, a 3.5x difference. Answer Readiness tells a similar story: 2.9 for Critical, 13.3 for Good.
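The size of that jump is simple arithmetic on the averages quoted above. The short sketch below reproduces it; the dictionary keys are labels chosen for this example, and the four values are taken directly from the figures in this section.

```python
# Average pillar scores quoted in this section.
critical = {"chunk_extractability": 5.4, "answer_readiness": 2.9}
good = {"chunk_extractability": 19.0, "answer_readiness": 13.3}

# Ratio of the Good average to the Critical average, rounded to one decimal.
ratios = {pillar: round(good[pillar] / critical[pillar], 1) for pillar in critical}

for pillar, ratio in ratios.items():
    print(f"{pillar}: {ratio}x")
```

By the same calculation, the Answer Readiness gap (about 4.6x) is even wider than the Chunk Extractability gap.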

This is not the result of minor tweaks. It reflects a content strategy that was built, consciously or otherwise, around extractability and query resolution rather than brand narrative.

The top performer in the study, a freight logistics platform with a score of 65, owes its position primarily to Query Alignment. Its content naturally uses the language that freight buyers use when asking AI for recommendations. Terms like freight procurement, carrier management, and load matching appear throughout in contextual, question-adjacent ways.

A corporate learning platform came in second at 64, with strong Chunk Extractability. Its content is structured in a way that makes individual features, outcomes, and use cases easy to lift as standalone answers.

A real estate crowdfunding platform scored 61 and holds a notable distinction: it is one of only four companies in the entire study to have any schema markup at all, and the only one where schema contributed meaningfully to the overall score.

Best in class by pillar:

A payments infrastructure company leads on Chunk Extractability: its technical documentation is already written in the precise, focused units that AI prefers. A proptech company leads on Query Alignment: its content most closely mirrors the way buyers ask AI about real estate investment tools. A digital health platform leads on Answer Readiness: its content is the most directly useful for resolving specific user queries.

These companies didn't necessarily set out to be AI-visible. But the structural choices they made (clear language, focused paragraphs, query-oriented copy) happen to be exactly what AI engines reward.

The 8/15 Ceiling

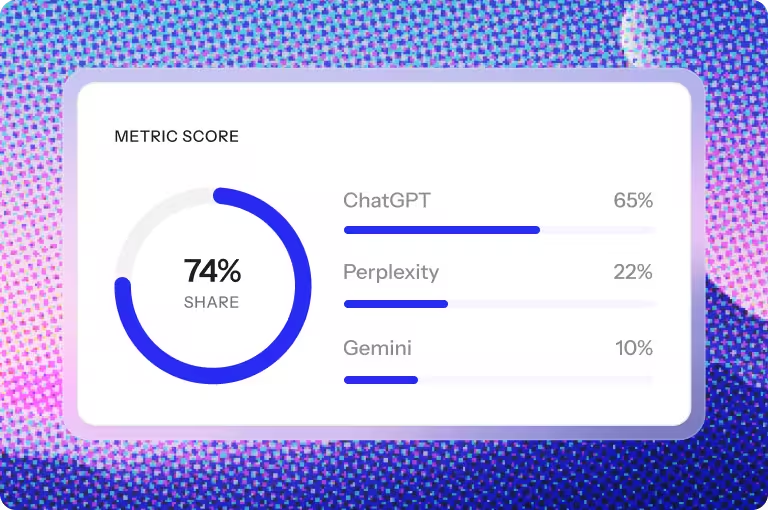

One of the more subtle findings in the data is a clustering effect among top performers.

Across 15 test queries run against each company, the maximum citation-ready count returned by any company in the study is 8. Not 10, not 12, but 8. This ceiling appears across companies of different sizes, sectors, and overall scores. A Fintech company scoring 56 hits 8/15. So does a logistics platform. So does a real estate platform.

This plateau is not a coincidence. It reflects a structural limit: content and query alignment can get a company to 8 citation-ready queries, but breaking through that ceiling requires the technical layer (schema markup and improved answer readiness) that the vast majority of companies have not yet addressed.

The practical implication is that most "Good" companies in Dubai's SaaS ecosystem are operating at roughly half their potential AI citation capacity, not because their content is weak, but because they haven't implemented the verification and structure signals that would allow AI engines to use them more confidently.

The 8/15 ceiling is not a wall. It's a known gap with a known solution.

Three Things a Dubai SaaS CMO Should Do This Week

The data points to a clear, prioritised action list. These are not long-term strategic initiatives. They are executable improvements that can move a company's AI readiness score meaningfully within weeks.

One: Implement schema markup on your homepage. Start with Organization, WebSite, and, if applicable, SoftwareApplication schema. This is the highest-leverage fix available to the 87% of companies that currently have none. It requires a developer for an afternoon and addresses the most common failure point in the entire study.

Two: Rewrite your homepage hero with a functional definition. The first sentence a visitor or an AI crawler encounters should answer: what is this company, who does it serve, and what does it help them achieve? If your current hero copy doesn't answer all three, rewrite it. This single change improves both Query Alignment and the AI's ability to correctly categorise your company.

Three: Audit your paragraph structure for extractability. Take your three highest-traffic pages. Read each paragraph in isolation. If it covers more than one idea, split it. If the opening sentence doesn't state the paragraph's topic, rewrite it. If a paragraph requires context from the paragraph above it to make sense, restructure it. Aim for paragraphs of two to four sentences, each fully self-contained.

None of these require a rebrand, a new content strategy, or a six-month project. They require precise, targeted changes to existing assets — and they address the three most common failure patterns found across 36 of Dubai's most active SaaS companies.

The Opportunity Is Wide Open

The average AI readiness score of 37.5 out of 100 is not a verdict on the quality of Dubai's SaaS ecosystem. The companies in this study are, in most cases, well-built products with strong teams and genuine market presence. What the score reflects is a gap between where their digital presence currently sits and what AI engines need in order to confidently recommend them.

That gap is closeable. The companies that close it first will earn a meaningful, compounding advantage in how they're discovered, evaluated, and recommended, not just today, but as AI-mediated discovery becomes the dominant mode of software evaluation.

The question is not whether this shift is coming. It is already here. The question is who in Dubai's SaaS ecosystem moves first.

Flozi audited 36 Dubai-based SaaS companies using its AI visibility scoring framework across five pillars: AI Access, Chunk Extractability, Answer Readiness, Query Alignment, and Schema Markup. 31 companies returned complete scores. 5 could not be scored due to technical accessibility issues. Sector averages exclude categories with fewer than two scorable companies.

Find out where your company stands

Flozi can run the same audit on your website, scoring your AI readiness across all five pillars and identifying exactly where your highest-leverage fixes are.

Get your free AI visibility audit: flozi.io
