How AI Chooses What to Cite: Inside the Retrieval Model

Master the mechanics of RAG (Retrieval-Augmented Generation). Learn how ChatGPT and Perplexity rank sources and which factors drive AI citations.

Most people think AI generates an answer and then looks for sources to back it up.

That’s backwards.

The process runs in the opposite direction. The AI finds sources first, evaluates them, and then builds its answer from what it found. The generation is the last step, not the first. Which means if the AI can’t find you during retrieval, nothing else matters. You’re optimizing for a stage you never reach.

Chapter 3 showed you where the value went when clicks dropped. This chapter shows you how the machine decides who gets that value. Not in theory. Through direct observation, pattern analysis, and testing we’ve done at Neue World across hundreds of queries and multiple platforms.

By the end of this chapter, you’ll understand the selection process well enough to audit your own content against it. That’s the goal. Not just knowledge. Diagnostic ability.

Retrieval vs. Generation: The Order Matters

Let’s start with a misconception that costs people months of wasted effort.

When you ask ChatGPT “what’s the best CRM for a small sales team,” many people imagine the AI thinking up an answer from its training, then finding sources to support what it already believes. Like a student writing the essay first and adding citations after.

That’s not how it works.

How the Process Actually Runs

Modern answer engines use a process called Retrieval-Augmented Generation, or RAG. The name is technical, but the concept is straightforward: retrieve first, generate second.

Here’s the sequence:

Retrieve. The system searches for relevant sources. Depending on the platform, this means querying the live web, pulling from training data, or searching a proprietary index. The AI gathers candidate information before it forms any opinion.

Rank. The retrieved sources get evaluated and prioritized. Not all sources are treated equally. The system weighs relevance, authority, freshness, and several other signals to decide which sources deserve the most attention.

Generate. Only now does the AI construct its response, synthesizing information from the top-ranked sources into a coherent answer.
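To make the order concrete, here is a deliberately tiny Python sketch of the three stages. Everything in it is a hypothetical stand-in: the `Source` fields, keyword overlap as "retrieval", and the fixed weights in `rank`. Real answer engines use search indexes, learned rankers, and a language model. The point is only structural: `generate` can draw on nothing that `retrieve` did not return. In the example, the narrowly focused page also outranks the broader, higher-authority guide because relevance carries the most weight in these made-up weights.

```python
from dataclasses import dataclass


@dataclass
class Source:
    url: str
    text: str
    authority: float  # hypothetical 0-1 trust score
    freshness: float  # hypothetical 0-1 recency score


def tokenize(text: str) -> set[str]:
    """Lowercase words with surrounding punctuation removed."""
    return {w.strip(".,?!").lower() for w in text.split() if w.strip(".,?!")}


def retrieve(query: str, corpus: list[Source], k: int = 10) -> list[Source]:
    """Stage 1 (the gatekeeper): keep up to k sources sharing a query term."""
    terms = tokenize(query)
    return [s for s in corpus if terms & tokenize(s.text)][:k]


def rank(query: str, candidates: list[Source]) -> list[Source]:
    """Stage 2: order candidates by a weighted mix of relevance, authority, freshness."""
    terms = tokenize(query)

    def score(s: Source) -> float:
        relevance = len(terms & tokenize(s.text)) / max(len(terms), 1)
        return 0.6 * relevance + 0.25 * s.authority + 0.15 * s.freshness

    return sorted(candidates, key=score, reverse=True)


def generate(ranked: list[Source], top_n: int = 2) -> str:
    """Stage 3: compose an 'answer' only from the top-ranked sources, citing each."""
    return " ".join(f"{s.text} [{s.url}]" for s in ranked[:top_n])


def answer(query: str, corpus: list[Source]) -> str:
    return generate(rank(query, retrieve(query, corpus)))


if __name__ == "__main__":
    corpus = [
        Source("https://example.com/b2b-open-rates",
               "What counts as a good open rate for B2B emails", 0.5, 0.9),
        Source("https://example.com/email-guide",
               "Everything you need to know about email marketing for beginners", 0.9, 0.5),
        Source("https://example.com/crm",
               "Choosing a CRM that small sales teams love", 0.9, 0.9),
    ]
    # The CRM page shares no terms with the query, so it is never retrieved or cited.
    print(answer("good open rate for B2B emails", corpus))
```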

This order is everything. It means the retrieval step is the gatekeeper. If your content doesn’t make it through retrieval, it doesn’t matter how good it is. The AI will never see it, never evaluate it, and never cite it.

Why This Changes Your Priority

In SEO, you could have mediocre technical foundations and still rank if your content was exceptional. The content could compensate for the infrastructure.

In AEO, that doesn’t work. If your content isn’t retrievable, it’s invisible. Technical accessibility isn’t a nice-to-have. It’s the entry ticket. The best article ever written about “how to calculate customer lifetime value” is worthless to the AI if it can’t crawl it, parse it, or find it in its index.

This is why Pillar 1 (Inclusion) from Chapter 2 comes first. Not because it’s the most important strategically, but because everything else depends on it.

How Each Platform Finds Sources

Here’s something that complicates AEO strategy: not every answer engine retrieves sources the same way. The differences are significant enough that optimizing for one platform doesn’t automatically optimize for all of them.

Google AI Overviews

Google draws from its own search index, the same massive database that powers traditional Google Search. This means the signals that help you rank organically (backlinks, domain authority, page experience) also influence whether you appear in AI Overviews. If you rank well in Google already, you have a structural advantage here.

But there’s a catch. Google AI Overviews heavily favor sources that provide direct, concise answers to the specific query. A page that ranks #1 for a broad keyword might get passed over if a page ranking #4 has a cleaner, more extractable answer to the exact question being asked.

Perplexity

Perplexity searches the live web in real time for every query. It doesn’t rely primarily on training data. It runs actual web searches, reads the results, and synthesizes them. This makes it the most transparent answer engine for AEO, because it cites inline sources consistently and you can see exactly which pages it pulled from.

For Perplexity, being indexable and current matters enormously. Content that was published or updated recently tends to get prioritized. Perplexity also seems to favor sources with clear structure (headers, lists, tables) that make information extraction easier.

ChatGPT (With Search)

ChatGPT’s search functionality uses Bing’s index as a foundation, supplemented by other sources. When a user’s query triggers the search feature, the system pulls live web results and incorporates them into the response alongside what the model already knows from training data.

This creates an interesting dynamic. For topics well-covered in training data, ChatGPT might generate an answer from memory and cite fewer external sources. For recent or niche topics, it leans more heavily on live search results. The implication: if you’re in a fast-changing field, getting indexed by Bing matters as much as getting indexed by Google.

Claude (With Search)

Claude’s web search implementation works similarly to ChatGPT’s, retrieving live web results when needed. It tends to provide inline citations and favors sources that are authoritative and clearly written.

What This Means for Your Strategy

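The practical takeaway is that you can’t optimize for “AI” as a monolith. Each platform has its own retrieval preferences: Google AI Overviews lean on Google’s search index, ChatGPT on Bing’s, and Perplexity and Claude on live web search.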

The minimum viable AEO strategy: make sure you’re indexable by both Google and Bing, keep your content current, and structure it so any system can extract answers cleanly. That covers your bases across platforms.

The advanced strategy: study which platforms your specific audience uses and weight your optimization accordingly.

What Increases Citation Likelihood

This is where the chapter gets concrete. We analyzed sources that consistently appear in AI-generated responses across platforms and tracked what they have in common. The patterns are clear enough to act on.

I’ve organized the factors into tiers based on how strongly they correlate with being cited.

Critical Factors (Present in 90%+ of Cited Sources)

Topical Relevance and Direct Answers

This sounds obvious, but the specifics matter. AI systems don’t just match keywords. They evaluate how well your content answers the actual question being asked. A page titled “Everything You Need to Know About Email Marketing” is less likely to get cited for “what’s a good open rate for B2B emails” than a page that directly addresses B2B email open rates with specific benchmarks.

The AI is looking for precision. It wants the tightest match between what the user asked and what your content provides. This favors pages that answer specific questions clearly over pages that cover broad topics generally.

Domain Authority and Trust Signals

Across every platform we tested, sources with established domain authority got cited more often. This doesn’t mean you need a DA of 90. But it means a brand-new blog with no backlinks, no brand mentions, and no history will struggle to get cited, even if the content is excellent.

The trust signals AI systems appear to weight most heavily: backlink quality (not just quantity), brand mentions across the web (even without links), consistent publishing history, and presence on platforms the AI already trusts (Wikipedia references, news mentions, industry citations).

Content Freshness

For any topic where information changes, freshness is critical. We found that for dynamic topics like tax law, software comparisons, or industry statistics, sources updated within the last 6-12 months were cited at dramatically higher rates than older content covering the same material.

For genuinely evergreen topics (how photosynthesis works, the Pythagorean theorem), freshness mattered less. But here’s the thing: fewer topics are truly evergreen than most content teams assume. Even “how to write a business plan” has evolved with AI tools and remote work trends.

High-Impact Factors (Present in 60-90% of Cited Sources)

Clear Authorship and Credentials

Sources with visible author information, especially authors with demonstrable expertise, got cited more often than anonymous or brand-only content. This was particularly pronounced for YMYL (Your Money, Your Life) topics like health, finance, and legal content.

The pattern suggests that AI systems are evaluating E-E-A-T signals (Experience, Expertise, Authoritativeness, Trustworthiness), not just as an SEO concept but as an actual input to source selection. An article about tax deductions written by a credentialed CPA, with their bio visible on the page, tends to get selected over a better-written article by an unnamed “content team.”

Structured, Extractable Formatting

AI systems need to parse your content before they can cite it. Sources with clear heading hierarchies, well-organized sections, tables, and lists were cited more consistently than sources with the same information presented as unbroken prose.

This isn’t about keyword-stuffing headers or manufacturing FAQ pages. It’s about genuine structural clarity. When the AI is scanning a 3,000-word article for the specific passage that answers a user’s question, clear H2s and H3s function like a table of contents. They help the AI find what it needs quickly.
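One way to check this on your own pages is to pull out the heading outline and read it on its own. This minimal sketch uses Python’s built-in `html.parser` to list H2 and H3 text in document order, indenting H3s. If the outline alone doesn’t tell you where each answer lives, the headings probably aren’t doing their job. It reads static HTML only, so it won’t see headings injected by JavaScript.

```python
from html.parser import HTMLParser


class OutlineParser(HTMLParser):
    """Collect (level, text) for every <h2> and <h3> in document order."""

    def __init__(self):
        super().__init__()
        self.outline = []
        self._level = None  # heading level while inside an <h2>/<h3>, else None
        self._buf = []

    def handle_starttag(self, tag, attrs):
        if tag in ("h2", "h3"):
            self._level = int(tag[1])
            self._buf = []

    def handle_data(self, data):
        if self._level is not None:
            self._buf.append(data)

    def handle_endtag(self, tag):
        if tag in ("h2", "h3") and self._level is not None:
            self.outline.append((self._level, "".join(self._buf).strip()))
            self._level = None


def heading_outline(html: str) -> list[str]:
    """Return the H2/H3 outline, with H3 entries indented by two spaces."""
    parser = OutlineParser()
    parser.feed(html)
    parser.close()
    return ["  " * (level - 2) + text for level, text in parser.outline]


if __name__ == "__main__":
    page = ("<h1>Guide</h1><h2>What is a good open rate?</h2><p>Intro</p>"
            "<h3>For B2B</h3><h2>How to improve it</h2>")
    print("\n".join(heading_outline(page)))
```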

Original Data and Primary Research

This one surprised us by how strongly it correlated. Sources that contained original data (surveys they conducted, experiments they ran, benchmarks they measured, case studies with real numbers) were cited at significantly higher rates than sources that synthesized existing information.

The AI can detect the difference between “According to a recent study, 60% of marketers…” and actually being the source of that study. Primary sources get cited. Summaries of primary sources get skipped in favor of the original.

Moderate-Impact Factors (Present in 30-60% of Cited Sources)

Schema Markup

Sources with schema markup (FAQ schema, HowTo schema, Article schema) were cited more often than those without, but the effect was less dramatic than you might expect from reading AEO advice online. Schema helps the AI understand your content structure, but it doesn’t compensate for weak content or low authority.

Think of schema as a translation layer. It makes your content easier for the AI to parse, which increases the probability of selection. But the AI still evaluates what it finds after parsing. Schema without substance is like a beautifully organized empty library.

Content Length and Depth

Longer, more comprehensive content was cited more often, but with diminishing returns past a certain point. Content in the 1,500-3,000 word range for informational queries seemed to hit the sweet spot. Shorter than that and the AI may not find enough substance to cite. Longer than that and the key information may be harder to extract.

The real factor isn’t word count. It’s information density. A 2,000-word article that covers a topic thoroughly will outperform a 5,000-word article that takes 3,000 words to get to the point.

What Kills Your Citation Chances

Understanding what helps is only half the picture. We also analyzed sources that should be getting cited (high authority, good content, strong rankings) but weren’t. The patterns here were just as instructive.

Technical Blockers

Blocking AI Crawlers

This is the most common and most fixable citation killer. Many sites block AI crawlers in robots.txt without their owners realizing it. Some block specific bots (GPTBot, ClaudeBot, PerplexityBot) deliberately. Others have legacy rules that accidentally prevent AI access.

If you’ve explicitly blocked AI crawlers, you’ve made a choice. But if you haven’t checked, do it now. A significant number of the “high authority, zero citations” sites in our analysis had this single problem.
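A quick way to run that check is Python’s standard-library `urllib.robotparser`. The sketch below takes the text of a robots.txt file (paste in yours from yourdomain.com/robots.txt) and reports which AI user-agents are denied a given path. The bot list is an assumption you should keep current: it covers the crawlers named in this chapter plus OAI-SearchBot, which OpenAI documents as its crawler for ChatGPT search. One caveat: Python’s parser applies the first matching rule in file order, which can differ from Google’s longest-match behavior when Allow and Disallow rules overlap.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens to audit; extend this tuple as vendors add new crawlers.
AI_BOTS = ("GPTBot", "OAI-SearchBot", "ClaudeBot", "PerplexityBot", "Google-Extended")


def blocked_ai_bots(robots_txt: str, path: str = "/",
                    bots: tuple[str, ...] = AI_BOTS) -> list[str]:
    """Return the bots this robots.txt forbids from fetching `path`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse() also marks the rules as loaded
    return [bot for bot in bots if not parser.can_fetch(bot, path)]


if __name__ == "__main__":
    sample = """\
User-agent: GPTBot
Disallow: /

User-agent: PerplexityBot
Disallow: /drafts/

User-agent: *
Allow: /
"""
    print(blocked_ai_bots(sample))                  # checks the site root
    print(blocked_ai_bots(sample, "/drafts/post"))  # checks a path-specific rule
```

The second call shows the precise alternative to a blanket block: PerplexityBot is kept out of `/drafts/` but can still reach everything else.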

JavaScript-Heavy Pages

Content rendered primarily through JavaScript is harder for AI crawlers to parse. If your key information only appears after JavaScript executes, some crawlers may never see it. Server-side rendering or static HTML for your most important content pages significantly improves retrievability.
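A rough “view source” test for this: check whether a sentence you care about appears in the page’s raw HTML, outside `<script>` and `<style>` blocks, before any JavaScript runs. The hypothetical helper below works on an HTML string you’ve already downloaded (for example with `curl`); it approximates what a non-rendering crawler could read, not how any particular crawler actually decides.

```python
from html.parser import HTMLParser


class StaticTextParser(HTMLParser):
    """Collect text nodes, skipping content inside script/style/template tags."""

    SKIP = {"script", "style", "template"}

    def __init__(self):
        super().__init__()
        self.parts = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.parts.append(data)


def in_static_html(html: str, phrase: str) -> bool:
    """True if `phrase` appears in the HTML's text without executing JavaScript."""
    parser = StaticTextParser()
    parser.feed(html)
    parser.close()
    text = " ".join(" ".join(parser.parts).split()).lower()  # collapse whitespace
    return " ".join(phrase.split()).lower() in text
```

A server-rendered page passes; a page that writes the same sentence into an empty `<div>` from a script fails.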

Paywalls Without Structured Previews

Paywalled content presents a dilemma. The AI can’t read what it can’t access. Some publishers handle this well by providing substantial previews with structured data that gives the AI enough to cite. Others lock everything behind the wall and become invisible to AI systems entirely.

Content Blockers

Buried Answers

The single most common content problem: the actual answer to the user’s question appears in paragraph eight or nine, after a lengthy introduction, background context, and tangential information. AI systems scan for relevant passages. If your key insight is buried deep, the AI may not reach it, or it may find a competitor’s version of the same information presented more accessibly.

Lead with your answer. Provide context after. This isn’t dumbing down your content. It’s respecting both the AI’s extraction process and the human reader’s time.
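You can make “lead with your answer” checkable in a draft. The hypothetical helper below splits plain text into paragraphs on blank lines and reports the first paragraph (1-based) containing a phrase you’d expect in the answer; the two-paragraph threshold is an assumption. Exact phrase matching is a crude stand-in for how AI systems actually score passages, so treat a flag as a prompt for review rather than a verdict.

```python
from typing import Optional


def answer_paragraph(text: str, answer_phrase: str) -> Optional[int]:
    """Return the 1-based index of the first paragraph containing the phrase, or None."""
    paragraphs = [p for p in text.split("\n\n") if p.strip()]
    needle = answer_phrase.lower()
    for i, para in enumerate(paragraphs, start=1):
        if needle in para.lower():
            return i
    return None


def is_buried(text: str, answer_phrase: str, max_paragraph: int = 2) -> bool:
    """True if the answer is missing or first appears after `max_paragraph`."""
    idx = answer_paragraph(text, answer_phrase)
    return idx is None or idx > max_paragraph


if __name__ == "__main__":
    draft = ("Email has a long history.\n\nMany teams send newsletters.\n\n"
             "A good open rate for B2B emails depends on your list.")
    print(answer_paragraph(draft, "good open rate"), is_buried(draft, "good open rate"))
```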

Promotional Tone Over Informational Value

Content that reads like a sales pitch gets cited less than content that reads like genuine expertise. We found this pattern repeatedly: a company’s blog post about “why our product solves X” would get passed over in favor of a third-party review or an independent guide that happened to mention the same product.

AI systems seem to detect promotional intent and discount it. If your content’s primary purpose is selling rather than informing, the AI will find a more neutral source.

Outdated Information

This goes beyond freshness signals. We found sources that had recently updated their “last modified” date but still contained outdated statistics, defunct links, and references to tools or regulations that no longer existed. The AI detected the staleness despite the cosmetic update.

Genuine freshness means the information itself is current, not just the datestamp.
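A crude way to catch the cosmetic-update problem is to scan the body text for dated references instead of trusting the modified date. This hypothetical helper flags four-digit years more than a chosen number of years old (the two-year default is an assumption). It will produce false positives, since a historical date can be perfectly accurate, so use it to build a review list, not to auto-edit.

```python
import re
from datetime import date
from typing import Optional

# Four-digit years from 1900 to 2099; a heuristic, not a date parser.
YEAR_PATTERN = re.compile(r"\b(?:19|20)\d{2}\b")


def stale_year_mentions(text: str, max_age_years: int = 2,
                        today: Optional[date] = None) -> list[int]:
    """Return distinct years in `text` more than `max_age_years` older than the
    current year, sorted ascending."""
    current_year = (today or date.today()).year
    years = {int(y) for y in YEAR_PATTERN.findall(text)}
    return sorted(y for y in years if current_year - y > max_age_years)


if __name__ == "__main__":
    body = "Updated for 2025. Our 2024 survey replaced the 2019 benchmark."
    print(stale_year_mentions(body, today=date(2025, 6, 1)))
```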

Authority Blockers

No Corroboration Across the Web

Sources that existed in isolation, with no mentions, citations, or references from other authoritative sites, were cited less often. The AI appears to use a form of consensus checking: if multiple trusted sources reference the same information, it’s more likely to be selected. If you’re the only one saying it and nobody else has acknowledged your claim, the AI treats it with more skepticism.

This is why distribution and presence (Layer 5 from Chapter 2’s system architecture) matter. Your content needs to exist in an ecosystem of references, not in a vacuum.

Conflicting Information

When your content contradicts the consensus established by multiple authoritative sources, AI systems become hesitant. This doesn’t mean contrarian content can’t get cited. But it needs even stronger authority signals to overcome the AI’s preference for consensus.

If you’re making a claim that goes against the grain, make sure your evidence is bulletproof and your credentials are visible. The AI will cite contrarian views from established authorities. It won’t cite them from unknown sources.

Why Authority Compounds in AI Systems

Here’s the part that should create urgency if nothing else in this chapter has.

AI citation isn’t static. It compounds. Sources that get cited early develop advantages that make future citations more likely, creating a feedback loop that’s difficult for latecomers to break.

The Citation Flywheel

When the AI cites your content, several things happen simultaneously:

Your brand gets mentioned to users. Some of those users search for you later, increasing your branded search volume. Google interprets rising branded searches as an authority signal. Your overall domain authority increases.

Other content creators encounter your work through AI. Some reference you in their own articles. Your backlink profile grows. The AI sees more external sources pointing to you. Your citation likelihood increases again.

The AI’s own model updates incorporate your prevalence. As you appear more frequently in the AI’s outputs and in the web content the AI is trained on, you become part of the AI’s baseline understanding of your topic. Future queries are more likely to surface you.

Each cycle reinforces the next. The brand that gets cited ten times this month is more likely to get cited twenty times next month. The brand that gets cited zero times faces the same odds next month.

The Winner-Takes-Most Distribution

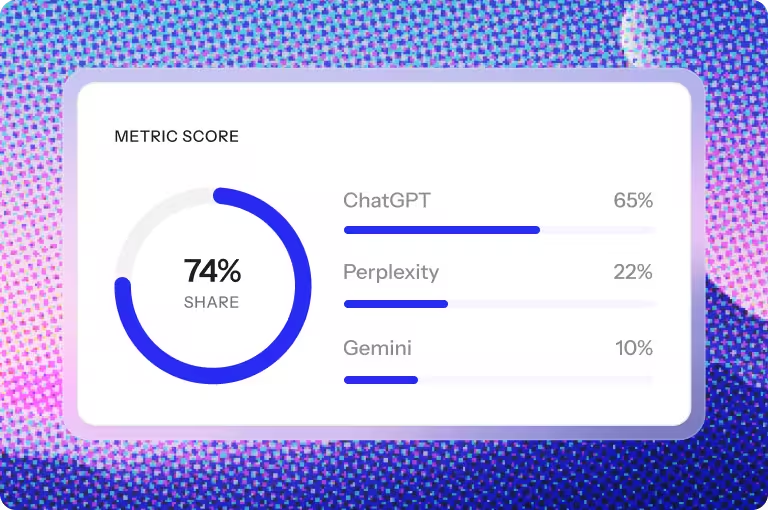

We tracked citation distribution across 20 competitive topics and found a pattern that mirrors what Chapter 1 described for organic search, but even more concentrated.

For most informational queries, citation follows a steep power law:

The #1 most-cited source captures 40-60% of citations across platforms. The #2 source captures 15-25%. Everyone else splits the remainder, with most sources getting cited once or not at all.
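The shape is easy to reproduce with a toy model. The sketch below assumes a Zipf-style power law, where the share of the source at rank k is proportional to 1/k^s. The exponent s = 1.5 and the field of ten sources are illustrative assumptions, not values fitted to our tracking data. With those settings the #1 source gets about 50% of citations and the #2 source about 18%, inside the ranges above, while the remaining eight split roughly a third.

```python
def zipf_shares(n_sources: int, s: float) -> list[float]:
    """Citation share for ranks 1..n under a Zipf law: weight(k) = 1 / k**s."""
    weights = [1 / k ** s for k in range(1, n_sources + 1)]
    total = sum(weights)
    return [w / total for w in weights]


if __name__ == "__main__":
    shares = zipf_shares(10, 1.5)
    for rank, share in enumerate(shares[:3], start=1):
        print(f"#{rank}: {share:.0%}")
    print(f"ranks 4-10 combined: {sum(shares[3:]):.0%}")
```

Raising `s` concentrates citations further on the top source; lowering it flattens the curve toward something closer to classic ten-blue-links traffic.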

This concentration is more extreme than organic search rankings. In traditional SEO, positions 1 through 10 all got some traffic. In AI citations, there’s often a primary source, a secondary source, and then everyone else is invisible.

Cross-Platform Authority Transfer

Here’s the encouraging finding for early movers: authority appears to be portable across platforms. Sources that were heavily cited in Perplexity also tended to be cited in ChatGPT and Google AI Overviews. Not perfectly correlated, but strongly enough to suggest that the same underlying signals (domain authority, content quality, freshness, backlinks) drive selection across engines.

This means your AEO investment isn’t platform-specific. Building authority for one engine largely builds it for the others. The signals are shared even though the retrieval methods differ.

What This Means for Timing

The compounding effect has a clear implication: starting AEO earlier creates a structural advantage that becomes harder to overcome over time. A competitor who begins optimizing for AI citations six months before you will have built citation momentum, authority signals, and brand recognition inside AI responses that you’ll need to outperform, not just match.

This isn’t a reason to panic. But it is a reason to stop treating AEO as something you’ll “get to eventually.”

Platform-Specific Citation Patterns

Not all answer engines are created equal when it comes to how and what they cite. Understanding these differences helps you prioritize.

Perplexity: The Most Transparent

Perplexity cites the most sources per response (typically 5-15 inline citations) and provides the clearest attribution. It’s the most “democratic” platform for citation, giving more sources a chance to appear. For brands building initial AEO momentum, Perplexity is often the easiest platform to get cited on.

Perplexity also tends to favor recent content more heavily than other platforms, making it particularly responsive to content updates and new publications.

Google AI Overviews: The Highest Stakes

Google AI Overviews reach the largest audience but cite fewer sources (typically 3-5 source cards). The competition for inclusion is fiercer, and Google’s existing search signals (backlinks, domain authority, page experience) weigh heavily. If you’re already strong in organic SEO, Google AI Overviews are your most natural AEO entry point.

ChatGPT: The Least Predictable

ChatGPT’s citation behavior varies the most between queries. Sometimes it provides multiple inline links. Sometimes it cites nothing at all. For queries answered from training data, it may not cite any external source. For queries triggering live search, it typically cites 2-5 sources.

This variability makes ChatGPT the hardest platform to optimize for directly, but also means that strong fundamentals (authority, content quality, Bing indexation) cover you when citations do appear.

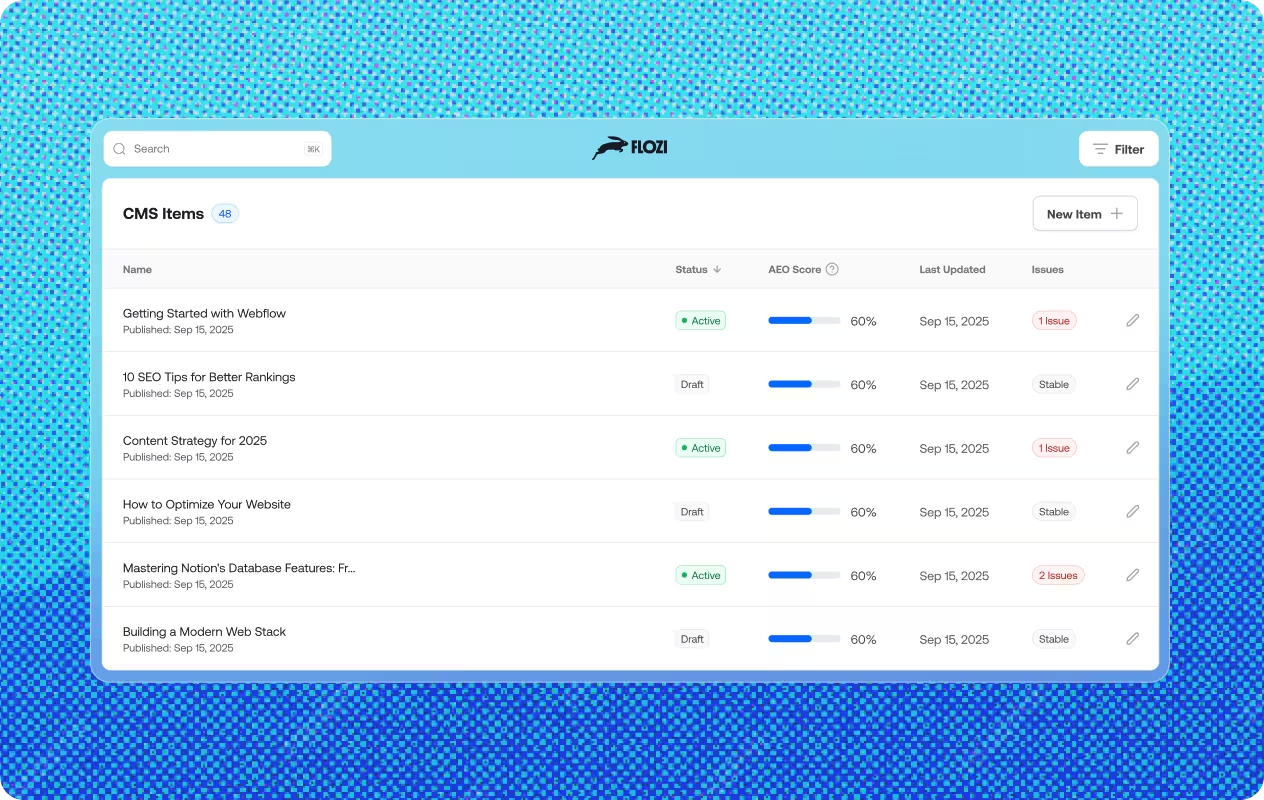

What to Do in Webflow for Each Platform

The theory above tells you why each platform behaves differently. This section tells you what to actually touch inside Webflow to act on it. These aren’t abstract best practices — they’re the specific settings, configurations, and content habits that move the needle for each engine.

ChatGPT: Get Into Bing’s Index and Stay There

ChatGPT’s search pulls from Bing’s index. If Bing hasn’t found you, ChatGPT won’t cite you — regardless of how well you rank on Google. Most Webflow sites are Google-first by default, which means Bing is often an afterthought. Fix that.

Bing Webmaster Tools. Submit your site to Bing Webmaster Tools and verify ownership. Once verified, submit your sitemap directly. Webflow auto-generates a sitemap at yourdomain.com/sitemap.xml — use that. Check the crawl reports periodically to confirm Bing is reaching your key pages and flag any access errors.
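Before submitting, it helps to know what the sitemap actually lists. The sketch below parses sitemap XML in the standard sitemaps.org format (which Webflow’s generated file is assumed to follow) with Python’s `xml.etree.ElementTree`, returning each URL with its `<lastmod>` value when present. It takes the XML as a string, so you can fetch yourdomain.com/sitemap.xml however you like.

```python
import xml.etree.ElementTree as ET
from typing import Optional

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_entries(xml_text: str) -> list[tuple[str, Optional[str]]]:
    """Return (loc, lastmod) pairs from a <urlset> sitemap; lastmod may be None."""
    root = ET.fromstring(xml_text)
    entries = []
    for url in root.findall("sm:url", NS):
        loc = url.findtext("sm:loc", default="", namespaces=NS).strip()
        lastmod = url.findtext("sm:lastmod", namespaces=NS)
        if loc:
            entries.append((loc, lastmod.strip() if lastmod else None))
    return entries
```

Comparing this list with the pages Bing reports as indexed shows which URLs it hasn’t reached yet.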

IndexNow setup. IndexNow is a protocol that lets you push URL notifications to Bing (and other supporting engines) the moment content is published or updated, rather than waiting for a crawler to find it. Webflow doesn’t have native IndexNow support, but you can trigger it via a Zapier workflow: when a new CMS item is published, the zap fires an IndexNow API call with the updated URL. This is especially valuable for collection pages — blog posts, case studies, service pages — where freshness directly affects ChatGPT citation likelihood.
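For reference, the IndexNow call itself is a small JSON POST, which is what the automation step needs to send. This Python sketch builds the request with the standard library; the host, key, and URLs are placeholders, and it assumes your key is already hosted as a text file at the site root, as the protocol requires. Check indexnow.org for the current endpoint and key rules before relying on it.

```python
import json
import urllib.request
from urllib.parse import urlparse

INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(host: str, key: str, urls: list[str]) -> urllib.request.Request:
    """Build (without sending) an IndexNow submission for URLs on a single host."""
    foreign = [u for u in urls if urlparse(u).hostname != host]
    if foreign:
        raise ValueError(f"URLs must belong to {host}: {foreign}")
    payload = {
        "host": host,
        "key": key,
        # Assumes the key file is served at the site root as <key>.txt
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": urls,
    }
    return urllib.request.Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


def submit(request: urllib.request.Request) -> int:
    """Send the request and return the HTTP status (IndexNow documents 200 and 202
    as accepted); error statuses raise urllib.error.HTTPError."""
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status
```

Keeping `build_indexnow_request` separate from `submit` lets you inspect or log the payload before anything goes over the network.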

Perplexity: Keep Content Current and Answers Obvious

Perplexity runs live web searches for every query and weights freshness heavily. Two things work against most Webflow sites: CMS content that goes stale quietly, and pages where the actual answer is buried in body copy.

Keep CMS content fresh. Build a habit of reviewing and re-publishing high-value CMS pages every 60–90 days, even if the core content hasn’t changed dramatically. Update statistics, swap in current examples, and refresh any references that could date the piece. In Webflow, re-publishing a page updates its Last-Modified header, which Perplexity’s crawler may use as a freshness signal. A cosmetic update is better than nothing, but genuine information updates are what Perplexity actually rewards: as the “Outdated Information” section above notes, the AI reads the content, not just the datestamp.
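To turn the 60–90 day habit into a working list, you can sort pages by last-modified date and flag the ones past your window. The sketch assumes you already have (URL, ISO date) pairs, for example from your sitemap’s `<lastmod>` values or a CMS export; the 90-day default mirrors the cadence above. It only tells you what is due for review, not whether the content is actually out of date.

```python
from datetime import date
from typing import Optional


def refresh_queue(pages: list[tuple[str, str]], max_age_days: int = 90,
                  today: Optional[date] = None) -> list[tuple[str, int]]:
    """Return (url, age_in_days) for pages older than max_age_days, oldest first."""
    today = today or date.today()
    due = []
    for url, modified in pages:
        # Keep only YYYY-MM-DD so full timestamps like 2024-09-15T08:00:00Z also parse.
        age = (today - date.fromisoformat(modified[:10])).days
        if age > max_age_days:
            due.append((url, age))
    return sorted(due, key=lambda item: item[1], reverse=True)
```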

Direct-answer formatting. Perplexity’s system extracts passage-level answers, not pages. That means it needs to find your answer quickly. In Webflow’s Rich Text or CMS body field, restructure your key pages so the direct answer to the primary question appears in the first 1–2 paragraphs or under a clearly labeled H2. Use a “Bottom Line Up Front” structure: state the answer, then explain it. If you’re covering a list topic (“5 ways to reduce churn”), use Webflow’s built-in list elements rather than formatting lists as plain paragraphs — the semantic markup makes extraction cleaner.
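When a list has been typed as ordinary paragraphs, the semantic markup that helps extraction is missing. A hypothetical check: scan the published HTML for `<p>` elements whose text starts like a list item (a number followed by “.” or “)”, or a bullet character). Those are usually candidates for real `<ul>` or `<ol>` items.

```python
import re
from html.parser import HTMLParser

# Text that begins like "1. ", "2) ", "- ", "• " or "* ".
LIST_LIKE = re.compile(r"^\s*(?:\d+[.)]|[-•*])\s+")


class ParagraphParser(HTMLParser):
    """Collect the text of each <p> element."""

    def __init__(self):
        super().__init__()
        self.paragraphs = []
        self._in_p = False
        self._buf = []

    def handle_starttag(self, tag, attrs):
        if tag == "p":
            self._in_p, self._buf = True, []

    def handle_data(self, data):
        if self._in_p:
            self._buf.append(data)

    def handle_endtag(self, tag):
        if tag == "p" and self._in_p:
            self.paragraphs.append("".join(self._buf))
            self._in_p = False


def fake_list_items(html: str) -> list[str]:
    """Return paragraph texts that look like list items typed as plain paragraphs."""
    parser = ParagraphParser()
    parser.feed(html)
    parser.close()
    return [p.strip() for p in parser.paragraphs if LIST_LIKE.match(p)]
```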

FAQ sections per page. One of the highest-leverage things you can do for Perplexity (and all answer engines) is add a dedicated FAQ block to each key page — 3 to 10 questions that target the specific things your audience would actually ask about that topic. Keep each answer tight: 40–60 words, direct, no preamble. In Webflow, the cleanest implementation is a CMS collection for FAQs that you can reference across pages via multi-reference fields, so you write each answer once and surface it wherever it’s relevant. Pair this with FAQ schema (covered in the Google section below) and you’ve given every platform a pre-extracted Q&A layer to pull from.
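The 40–60 word target is easy to enforce before publishing. This sketch takes question and answer pairs (for instance, exported from an FAQ collection like the one described above) and reports any answer outside the range, using a simple whitespace word count as an approximation.

```python
def faq_length_issues(faqs: list[tuple[str, str]], min_words: int = 40,
                      max_words: int = 60) -> list[tuple[str, int]]:
    """Return (question, word_count) for answers outside [min_words, max_words]."""
    issues = []
    for question, answer in faqs:
        count = len(answer.split())
        if not min_words <= count <= max_words:
            issues.append((question, count))
    return issues
```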

Topic clusters over isolated pages. Perplexity consistently favors sources with depth and coverage, not single standalone articles. If your blog has ten unrelated posts, you’re competing for citations with ten different authority profiles. If those posts are organized as a cluster — one pillar page that covers the topic broadly, with supporting articles answering specific sub-questions — the AI reads you as an authority on the topic rather than a one-off source. In Webflow, structure this using CMS categories or tags to group related collection items, and use the pillar page to link explicitly to each supporting piece. Internal links between related pages are a signal, not just a UX choice.
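A simple cluster audit compares the pillar page’s internal links against the supporting pages it is supposed to cover. The sketch below extracts `href` values with `html.parser`, resolves them against the pillar’s URL, and returns the supporting URLs the pillar never links to. URL normalization is deliberately minimal (it drops fragments and trailing slashes only), an assumption you may need to tighten for your site.

```python
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin


class LinkParser(HTMLParser):
    """Collect href values from <a> tags."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def normalize(url: str) -> str:
    return urldefrag(url).url.rstrip("/")


def missing_cluster_links(pillar_url: str, pillar_html: str,
                          supporting_urls: list[str]) -> list[str]:
    """Return supporting URLs that the pillar page does not link to."""
    parser = LinkParser()
    parser.feed(pillar_html)
    parser.close()
    linked = {normalize(urljoin(pillar_url, href)) for href in parser.hrefs}
    return [u for u in supporting_urls if normalize(u) not in linked]
```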

Google AI Overviews: Canonical Tags and Schema

Google AI Overviews draw from Google’s own search index, so the usual SEO fundamentals apply. Two Webflow-specific levers have the most direct impact on AI Overview inclusion: canonical clarity and schema markup.

Canonical tags. Google AI Overviews penalize content fragmentation. If the same information lives across multiple pages (paginated blog archives, tag pages, duplicate service pages with slightly different URLs), Google may split authority across variants instead of consolidating it on the page you actually want cited. In Webflow, set canonical tags explicitly on every CMS collection item and static page under Page Settings → SEO → Canonical URL. For CMS collections, use Webflow’s built-in canonical field to point to the primary URL. For any pages that are filters or variations of a core page, canonicalize them back to the original.
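After publishing, it’s worth confirming what each page actually declares. This sketch reads a page’s HTML and returns the `href` of its `<link rel="canonical">` element, or None if there isn’t one. Passing the URL you expect (the page itself for a primary page, the original for a variant) catches canonicals that point at the wrong place. It only inspects the HTML you pass in and ignores canonicals sent via HTTP headers.

```python
from html.parser import HTMLParser
from typing import Optional


class CanonicalParser(HTMLParser):
    """Record the href of the first <link rel="canonical"> element."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        if tag == "link" and self.canonical is None:
            attributes = dict(attrs)
            rels = (attributes.get("rel") or "").lower().split()
            if "canonical" in rels:
                self.canonical = attributes.get("href")


def canonical_url(html: str) -> Optional[str]:
    parser = CanonicalParser()
    parser.feed(html)
    parser.close()
    return parser.canonical


def canonical_matches(html: str, expected_url: str) -> bool:
    """True if the page declares a canonical equal to expected_url (ignoring a trailing slash)."""
    found = canonical_url(html)
    return found is not None and found.rstrip("/") == expected_url.rstrip("/")
```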

Schema markup. Schema helps Google AI Overviews understand what type of content a page contains and extract structured answers. Webflow doesn’t add schema automatically, so you’ll need to add it via a custom code embed in the <head> of each page or page template. At minimum, add:

- Article schema on all blog posts and editorial content (name, author, datePublished, dateModified)

- FAQ schema on any page that has a question-and-answer structure — this directly feeds Google’s ability to pull discrete Q&A pairs into AI Overviews

- HowTo schema on step-by-step guides or process pages

- Organization schema on your homepage (name, url, logo, sameAs for your social profiles)

Use Google’s Rich Results Test to validate after adding. In Webflow, CMS-driven schema can be templated using dynamic fields inside the custom code embed, so you don’t need to hand-write it for every post.
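To show what templated output might look like, this Python sketch generates Article and FAQPage JSON-LD and wraps it in the `<script type="application/ld+json">` tag you’d place in a head embed. In Webflow you would insert CMS fields where the function arguments are; the sample values are hypothetical. It uses `headline` for the title, the property Google’s Article structured-data documentation recommends, rather than `name`.

```python
import json


def article_jsonld(headline: str, author: str, published: str, modified: str) -> dict:
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": published,
        "dateModified": modified,
    }


def faq_jsonld(faqs: list[tuple[str, str]]) -> dict:
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {"@type": "Question", "name": q,
             "acceptedAnswer": {"@type": "Answer", "text": a}}
            for q, a in faqs
        ],
    }


def script_tag(data: dict) -> str:
    # Escape "<" so a value containing "</script>" cannot close the tag early.
    body = json.dumps(data, ensure_ascii=False, indent=2).replace("<", "\\u003c")
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    print(script_tag(article_jsonld("How to calculate customer lifetime value",
                                    "Jane Doe", "2024-01-10", "2024-06-01")))
```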

Foundational Setup: What to Do Regardless of Platform

These aren’t platform-specific — they’re the baseline that makes everything else work.

Allow AI crawlers in your robots.txt. This sounds obvious, but it’s the most common silent citation killer. You don’t need special Allow rules; you need to make sure nothing disallows the relevant user-agents: GPTBot and OAI-SearchBot (OpenAI, which documents OAI-SearchBot as the crawler behind ChatGPT’s search results), PerplexityBot (Perplexity), ClaudeBot (Anthropic), and Google-Extended (Google’s Gemini products; Google states this token doesn’t affect inclusion in Search, so AI Overviews follow your Googlebot rules). Webflow’s default robots.txt doesn’t block these, but custom rules, especially on older sites or sites migrated from other platforms, sometimes do. Check your robots.txt at yourdomain.com/robots.txt and confirm none of these user-agents are disallowed. If you’re intentionally withholding certain pages from AI crawlers, be precise: block specific paths, not the entire bot.

Build a Resources, Guides, or Glossary section. AI engines heavily favor sites that demonstrate topical depth across multiple pages. A dedicated hub — whether you call it Resources, Guides, or Glossary — gives you a place to build out the cluster content mentioned above, and signals to crawlers that your site has breadth on a topic, not just a single article. In Webflow, this is a CMS collection with its own category template. Build it once, populate it over time.

Create an LLM info page. Some teams are now adding a structured page — sometimes called an “about this site” or brand summary page — specifically designed to give AI systems a compact, accurate description of who they are, what they cover, and why they’re authoritative. Think of it as a robots.txt for your brand identity: a single URL the AI can reference to understand your site’s purpose, areas of expertise, and credentials. In Webflow, a static page with clean semantic HTML and Organization schema is all you need. Keep it factual, specific, and unpromotional.
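A minimal sketch of the Organization markup for that page, generated in Python for consistency with the other examples; every value is a placeholder. `sameAs` holds URLs of profiles that represent the same organization, which is how schema.org ties your site to them.

```python
import json


def organization_jsonld(name: str, url: str, logo: str, same_as: list[str],
                        description: str = "") -> str:
    """Return an Organization JSON-LD <script> tag for a brand summary page."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo,
        "sameAs": same_as,  # profiles that represent the same organization
    }
    if description:
        data["description"] = description
    # Escape "<" so no value can close the <script> element early.
    body = json.dumps(data, indent=2).replace("<", "\\u003c")
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    print(organization_jsonld(
        name="Example Co",
        url="https://example.com",
        logo="https://example.com/logo.png",
        same_as=["https://www.linkedin.com/company/example"],
        description="Example Co publishes guides on customer retention.",
    ))
```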

How to Tell If It’s Working

Most teams add AEO tactics and then never close the loop. They don’t know if anything changed because they’re not looking in the right places. Before The Takeaway, here’s how to actually check.

Search Perplexity directly. Go to perplexity.ai, enter the queries you’re targeting, and look at the “Sources” panel on the right. If your domain appears there, you’re being cited. Do this for your five to ten most important keywords and note which pages Perplexity is pulling. This is the fastest feedback loop available — you don’t need a tool.

Test ChatGPT the same way. Open ChatGPT with search enabled (the globe icon), ask your target queries, and check which URLs appear in the citation list below the response. ChatGPT’s citations are less consistent than Perplexity’s, but they’re visible enough to tell you whether you’re in the index and being selected.

Track referral traffic in your analytics. In whatever analytics tool you’re using with Webflow (GA4, Plausible, Fathom), look at referral sources. Traffic from chatgpt.com and perplexity.ai is direct evidence of citations driving visits. These numbers tend to be small early on, but their presence confirms you’re appearing in answers. If you still see none after 60–90 days of optimized content, revisit your technical setup.
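If your analytics tool lets you export raw referrer URLs, a few lines of code can tally visits by answer engine. The hostname map below is an assumption: it covers the two domains named above plus chat.openai.com, ChatGPT’s older domain, and you should extend it as new referrers show up in your own data. Subdomains such as www.perplexity.ai are matched too.

```python
from collections import Counter
from typing import Optional
from urllib.parse import urlparse

# Referrer domains mapped to answer engines; extend as needed.
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
}


def engine_for(referrer: str) -> Optional[str]:
    """Return the answer engine for a full referrer URL, or None."""
    host = (urlparse(referrer).hostname or "").lower()
    for domain, engine in AI_REFERRERS.items():
        if host == domain or host.endswith("." + domain):
            return engine
    return None


def ai_referral_counts(referrers: list[str]) -> Counter:
    """Count visits per answer engine, ignoring non-AI referrers."""
    return Counter(e for e in map(engine_for, referrers) if e)
```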

Check Bing Webmaster Tools for crawl coverage. Since ChatGPT’s search draws on Bing’s index, Bing’s crawl reports tell you whether your pages are being seen. Use the URL Inspection tool on your key pages. If Bing hasn’t indexed something, ChatGPT’s search won’t cite it, regardless of how well it performs on Google.

The Takeaway

The AI doesn’t choose sources randomly. It follows a structured process: retrieve, rank, generate, cite. Each stage has observable patterns, and each pattern suggests specific actions.

If you take nothing else from this chapter, take these five things:

First, be findable. If AI crawlers can’t access your content, nothing else matters. Check your robots.txt. Ensure you’re indexed by both Google and Bing. Fix any technical blockers.

Second, be the primary source. AI rewards original information. If you’re summarizing what others have published, the AI will cite the original, not you. Create data, run experiments, publish research, share proprietary insights.

Third, be clear. Lead with your answer. Structure your content with clear headings. Make it easy for the AI to find and extract the specific information that answers the user’s question.

Fourth, be credible. Show your expertise visibly. Author bios, credentials, institutional affiliations, citations of peer-reviewed sources. The AI evaluates trust signals. Make yours obvious.

Fifth, start now. Citation compounds. The early movers build flywheel advantages that are expensive and time-consuming to overcome. Every month you delay is a month your competitors are building citation momentum without you.

The next chapter takes everything you’ve learned about how the machine works and translates it into a complete AEO strategy. What to do first. How to structure your content. How to measure what’s working. The mechanics are done. Now we build.
