Trust Is the Ranking System: E-E-A-T for Answer Engine Optimization

Entity-First Optimization showed you how to organize content around entities, how to build topic surface area, and how to connect the pieces so AI systems can navigate your knowledge. That's the structural foundation.

But here's something we kept running into while testing entity coverage strategies: sites that did everything right structurally, and still got zero citations.

Complete coverage. Clean hub-and-spoke architecture. Good internal linking. Strong writing. Nothing. The AI skipped right over them and cited someone else, often someone with thinner content on the same topic.

When we dug into what separated the cited sources from the ignored ones, the pattern was consistent. It wasn't depth. It wasn't structure. It wasn't even how well the content answered the question.

It was trust.

The cited sources had visible, verifiable trust signals. The ignored ones didn't. And no amount of content quality could overcome that gap.

That's what this chapter is about. In answer engine optimization, trust isn't one factor among many. It's the gatekeeper. Everything else you've learned in this series (entity coverage, content structure, the answer-first framework) only works once you've crossed a trust threshold that the AI considers acceptable.

Let's talk about what that threshold looks like and how to cross it.

The Trust Threshold: Why Great Content Gets Ignored

In traditional SEO, you could sometimes muscle past weak authority with exceptional content and smart technical optimization. A new blog with a brilliantly written guide could outrank established players if the on-page SEO was sharp enough.

That doesn't work in AEO.

AI systems are fundamentally risk-averse. When ChatGPT, Perplexity, or Google's AI Overviews synthesize an answer and attribute it to a source, the system is putting its own credibility on the line. If the source is wrong, the AI looks wrong. And for the companies building these systems, mistakes carry real costs: misinformation, legal liability, user trust erosion.

So the AI defaults to safe choices. Established authorities. Recognized institutions. Sources with visible, verifiable credentials.

We tested this directly. We tracked 200 queries across health, finance, technology, and general knowledge topics, comparing which sources got cited against their content quality and authority signals.

The pattern was striking. Sources from Mayo Clinic, Investopedia, and official .gov sites were cited consistently, even when their content was competent but not exceptional. Meanwhile, independent creators with genuinely excellent content on the same topics (comprehensive, well-researched, clearly written) were cited rarely or not at all.

The difference wasn't subtle. It looked like a cliff. Below a certain level of perceived authority, content quality barely mattered. Above that level, content quality started to differentiate. But below it? Invisible.

We started calling this the Trust Threshold. Think of it as a minimum entry requirement. Below it, the AI doesn't evaluate your content at all. It doesn't matter how good your answer is if the system never considers you as a potential source.

This is the single biggest shift from SEO to AEO. In SEO, authority was one of many ranking factors. In AEO, trust is a prerequisite. Without it, nothing else you do matters.

What Trust Signals AI Systems Actually Look For

If trust is the gatekeeper, the obvious next question is: what does the AI consider trustworthy?

We studied 100 sources that were frequently cited across Perplexity, ChatGPT, and Google AI Overviews. We documented every visible signal on those pages and compared them against 100 sources that covered similar topics with similar quality but were rarely or never cited.

The results fell into a clear hierarchy.

Critical Signals (Present in 90%+ of Frequently Cited Sources)

Author bylines with credentials. Not just a name. A name with a visible qualification, title, or institutional affiliation. "Dr. Sarah Chen, Board-Certified Dermatologist" beats "by Staff Writer" every time, especially on YMYL (Your Money, Your Life) topics like health, finance, and legal guidance.

Transparent About pages. The cited sources almost universally had detailed About pages that explained who they are, what they do, and why they're qualified. Not a paragraph of marketing copy. Actual transparency: team members, editorial processes, company history, contact information.

Sources cited within the content. This one surprised us with how consistent it was. Highly cited pages almost always cited their own sources, linking to peer-reviewed research, official data, or primary documents. The act of citing credible sources appears to signal rigor to the AI.

Publication and update dates. Visible timestamps telling the reader (and the AI) when the content was written and when it was last reviewed or updated.

Important Signals (Present in 60-90% of Frequently Cited Sources)

Institutional affiliation. Content from recognized organizations, universities, government agencies, established media outlets, and professional associations was cited at significantly higher rates than content from unaffiliated individuals. We call this the Institutional Halo Effect. The institution's brand transfers credibility to the content, even when the individual author isn't well-known.

Editorial standards disclosure. Pages that described their editorial or fact-checking process, even briefly, appeared more often in citations. Think of the "Editorial Policy" or "How We Review" links you see on major health and finance sites.

Quality backlink profiles. Not just volume. The cited sources tended to have backlinks from other authoritative sources in their field. This isn't new to anyone who understands SEO, but it plays a slightly different role in AEO. Rather than boosting rankings directly, backlinks from credible sources appear to contribute to the AI's overall trust assessment of your domain.

Helpful but Not Required (Present in 30-60% of Frequently Cited Sources)

Social media verification and presence. Verified accounts, active professional profiles, and consistent cross-platform identity. These helped but weren't make-or-break.

Wikipedia presence. Being mentioned on Wikipedia, especially having a dedicated page, correlated with higher citation rates. But this is more of a symptom of established authority than a signal you can easily engineer.

Awards and industry recognition. Helpful for reinforcing authority, but not something the AI appears to weight heavily on its own.

The takeaway from this analysis: the critical signals are things any organization can implement. Author bylines, credentials, transparent About pages, source citations, and timestamps. These aren't expensive or technically complex. They're just rarely prioritized.

Proof Assets: What Actually Moves the Needle

Beyond the baseline trust signals, certain types of content act as trust accelerators. We call them proof assets, and they work because they demonstrate expertise rather than just claiming it.

Original Data and Research

This is the single most powerful trust asset. When we studied content that was cited as a primary source (the source the AI references directly rather than one of several supporting sources), original data was the common thread.

Sources that generated their own research, whether through surveys, experiments, proprietary datasets, or novel analysis, were cited at dramatically higher rates than sources that synthesized existing information. The AI treats primary sources differently from aggregators. If you've produced the data, you're the authority. If you're summarizing someone else's data, you're a secondary source, and the AI may just go to the primary source instead.

The hierarchy we documented looks like this, from highest citation impact to lowest:

Tier 1: Original research, proprietary data, controlled experiments.

Tier 2: Original case studies with real numbers, expert interviews, field testing results.

Tier 3: Aggregation and novel analysis of existing data.

Tier 4: Synthesis and summarization of other sources.

You don't need a research lab. A survey of 200 customers, a documented A/B test, an analysis of your own platform's data. These all count as original research that the AI values as primary source material.

Case Studies with Specifics

We tested this with two versions of the same content. Version A gave general advice: "Companies should implement X strategy to improve Y outcome." Version B gave the same advice but included an original case study: "Company Z implemented X strategy over 90 days and saw Y improve by 34%, with specific breakdowns by channel."

Over 60 days of tracking, Version B was cited roughly 3x more often. And when it was cited, it was more frequently the primary source rather than a supporting mention.

The specificity matters. The AI appears to treat specific, documented outcomes as evidence of firsthand experience. Generic advice, no matter how accurate, reads as secondhand knowledge.

Citing Credible Sources in Your Own Content

This one creates a positive feedback loop. When you cite authoritative sources (peer-reviewed papers, official statistics, recognized industry reports), it signals to the AI that your content is well-researched and rigorous.

We analyzed citation behavior in the 100 frequently cited sources from our earlier study. On average, they cited 8-12 external sources per piece, with a strong skew toward primary and institutional sources. The poorly cited sources averaged 2-3 external citations, often to other secondary sources.

Citing well doesn't just help your readers. It signals to the AI that you're part of a trusted information ecosystem.
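If you want to see where your own pages stand against those averages, counting outbound links is a rough first pass. The following is a minimal sketch using only the Python standard library. It assumes that every link to another domain counts as a citation, which overstates the number if a page links out for other reasons. The function name `external_links` and the sample domains are hypothetical.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class _ExternalLinkCollector(HTMLParser):
    """Collects href values of <a> tags that point outside own_domain."""

    def __init__(self, own_domain):
        super().__init__()
        self.own_domain = own_domain.lower()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if not href:
            return
        host = urlparse(href).netloc.lower()
        # Relative links and mailto: links have no host, so they are skipped.
        if not host:
            return
        is_own = host == self.own_domain or host.endswith("." + self.own_domain)
        if not is_own:
            self.links.append(href)


def external_links(html, own_domain):
    """Return the outbound links found in an HTML string, in document order."""
    collector = _ExternalLinkCollector(own_domain)
    collector.feed(html)
    return collector.links


if __name__ == "__main__":
    sample = (
        '<p><a href="/about">About</a> '
        '<a href="https://www.example.com/post">Our post</a> '
        '<a href="https://data.gov/report">Official data</a></p>'
    )
    print(len(external_links(sample, "example.com")))
```

The count says nothing about source quality. A manual pass is still needed to check whether those links point to primary and institutional sources or to other secondary ones.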

Author Credibility and Publisher Credibility

Trust in AEO operates on two levels: who wrote it, and where it was published. Both matter, but they matter differently depending on the topic.

The Byline Effect

We compared citation rates across three groups of content: pieces with a named author and visible credentials, pieces attributed to "Staff Writer" or a generic team credit, and pieces with no byline at all.

The named-author group was cited 2-3x more frequently than the no-byline group. The "Staff Writer" group fell in between, closer to no-byline than to named-author.

But the effect wasn't uniform across topics. For YMYL content (health, finance, legal), credentials were nearly essential. An MD after a name on a health article, a CPA on a tax guide, a JD on a legal explainer. Without those, the content was almost never cited, regardless of quality.

For general knowledge and lifestyle topics, credentials mattered less. A clear byline with a professional bio was usually sufficient, even without formal certifications.

The Institutional Halo

We compared citation patterns between institutional and independent sources covering the same topics at similar depth. Mayo Clinic vs. an independent doctor's blog. Harvard Business Review vs. an independent business writer. IEEE publications vs. independent tech bloggers.

The institutional sources were cited at significantly higher rates. Not because their content was always better (sometimes it wasn't), but because the institution's reputation transferred to the individual piece.

This matters strategically. If you're building authority from scratch, institutional affiliation is one of the fastest shortcuts available. Contributing to recognized publications, partnering with established organizations, getting quoted in mainstream media. These all borrow trust from entities the AI already recognizes.

It doesn't have to be permanent. Even a few guest contributions to established publications can create enough of a trust signal to get the AI to start evaluating your own site's content more seriously.

Cross-Platform Consistency

One pattern we noticed across frequently cited authors: their information was consistent everywhere. The bio on their website matched their LinkedIn profile, which in turn matched their contributor pages on other publications. Same credentials, same affiliations, same areas of expertise.

This makes sense when you consider how AI training data works. The model has likely encountered these authors across multiple sources. Consistent information reinforces the author's identity and credibility. Inconsistent information (different titles, different credentials, conflicting bios) creates ambiguity that the AI may resolve by simply ignoring the source.

If you're building author authority, audit your presence across every platform. Make sure the story is the same everywhere.
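A simple way to run that audit is to record each profile's key fields and flag any field whose values disagree. The sketch below assumes you have already collected the fields by hand. The platform names, field names, and sample values are hypothetical, and the comparison ignores only case and extra whitespace.

```python
def _normalize(value):
    """Lowercase and collapse whitespace so trivial differences are ignored."""
    return " ".join(value.lower().split())


def find_inconsistencies(profiles):
    """Return {field: {platform: value}} for fields whose values disagree.

    profiles maps a platform name to a dict of field name -> value.
    A field missing from a platform is not treated as a conflict.
    """
    fields = set()
    for profile in profiles.values():
        fields.update(profile)

    conflicts = {}
    for field in sorted(fields):
        values = {
            platform: profile[field]
            for platform, profile in profiles.items()
            if field in profile
        }
        if len({_normalize(v) for v in values.values()}) > 1:
            conflicts[field] = values
    return conflicts


if __name__ == "__main__":
    author_profiles = {
        "website": {"title": "Board-Certified Dermatologist",
                    "employer": "Example Clinic"},
        "linkedin": {"title": "board-certified dermatologist",
                     "employer": "Example Health Group"},
    }
    for field, values in find_inconsistencies(author_profiles).items():
        print(field, values)
```

Anything the function reports is a candidate for cleanup. Whether a difference is a real conflict (a changed employer) or a harmless variation (an abbreviated title) is still a human call.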

Freshness: Trust Has an Expiration Date

Trust isn't static. Content that was trustworthy two years ago might not be today, and the AI knows the difference.

We tracked citation rates for 50 pieces of content that had been consistently cited over a six-month period, then stopped being updated. Within three to five months of going stale (no updates, no "last reviewed" changes), citation rates began to drop. Not all at once, but steadily.

The effect varied by topic type:

Fast-changing topics (technology, regulations, current events): freshness was critical. Content older than six months saw sharp drops in citation frequency.

Slow-changing topics (industry practices, established methods): content stayed viable for 12-18 months, but eventually declined without updates.

Evergreen topics (fundamentals, history, core concepts): freshness mattered least, but even here, a "last updated" stamp from within the past year outperformed undated content.

We also tested something specific: does simply updating the "last modified" date without changing the content help? Minimally. The AI appears to give some weight to freshness signals, but meaningful content updates outperformed cosmetic date changes by a wide margin.

The practical takeaway: build a content maintenance calendar. Review and update your highest-value content on a schedule: quarterly for fast-changing topics, every six months for slow-changing ones. And make the update visible. Show the "last updated" date. Add an editorial note about what changed. This signals active maintenance and ongoing commitment to accuracy.
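A maintenance calendar can start as a small script that flags pages past their review date. This is a minimal sketch under a few assumptions: the intervals are approximated in days (quarterly as 91, six months as 182), the annual interval for evergreen content comes from the FAQ at the end of this chapter, and the topic labels and page list are hypothetical.

```python
from datetime import date, timedelta

# Review intervals in days, approximating the cadence described in the text.
REVIEW_INTERVAL_DAYS = {
    "fast": 91,        # technology, regulations, current events
    "slow": 182,       # industry practices, established methods
    "evergreen": 365,  # fundamentals, history, core concepts
}


def next_review_date(last_updated, topic_type):
    """Return the date by which a page should next be reviewed."""
    return last_updated + timedelta(days=REVIEW_INTERVAL_DAYS[topic_type])


def overdue_pages(pages, today):
    """Return the URLs whose review date is today or earlier.

    pages is a list of (url, topic_type, last_updated) tuples.
    """
    return [
        url
        for url, topic_type, last_updated in pages
        if next_review_date(last_updated, topic_type) <= today
    ]


if __name__ == "__main__":
    inventory = [
        ("/guides/api-pricing", "fast", date(2024, 1, 1)),
        ("/guides/what-is-aeo", "evergreen", date(2024, 1, 1)),
    ]
    print(overdue_pages(inventory, date.today()))
```

The script only tells you what is due. The review itself should produce a meaningful content change, since cosmetic date changes performed poorly in our test.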

E-E-A-T Reimagined for Answer Engines

If you're familiar with Google's E-E-A-T framework (Experience, Expertise, Authoritativeness, Trustworthiness), you already have a mental model for quality signals. But in AEO, each dimension needs to be translated into something the AI can actually detect and verify.

Here's how we think about the translation:

Experience becomes documented firsthand involvement. In the SEO context, "experience" was somewhat abstract. In AEO, it means content that contains original case studies, field testing results, "we did X and here's what happened" narratives, and first-person professional observations. The AI can distinguish between someone explaining a concept from research and someone explaining it from practice, because the language patterns and specificity are different.

Expertise becomes credentialed authority. Visible credentials, institutional affiliations, published work, industry recognition. Not just "I know about this topic," but verifiable evidence of qualification. The more the AI can cross-reference your claimed expertise against external sources, the stronger this signal becomes.

Authoritativeness becomes external validation. Backlinks from other trusted sources, brand mentions in media, being cited by other content that the AI already trusts. Authority in AEO is less about domain metrics and more about whether other recognized entities in your field acknowledge your existence.

Trustworthiness becomes transparency signals. Author bylines, editorial standards, source citations, contact information, update dates. All the things that say "we have nothing to hide and here's how we produced this content."

The key difference from traditional E-E-A-T: in AEO, these signals need to be machine-readable, not just human-assessable. A human quality rater can read between the lines and infer expertise from writing style. The AI needs explicit, structured signals it can verify across sources.
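One common way to express these signals in structured form is schema.org markup embedded as JSON-LD. The sketch below is an illustration, not a description of what any answer engine actually parses, and we did not test markup in isolation. The property names (`author`, `jobTitle`, `affiliation`, `datePublished`, `dateModified`, `citation`) are schema.org vocabulary. The function name, author, organization, and URLs are hypothetical.

```python
import json
from datetime import date


def build_article_jsonld(headline, author_name, job_title, affiliation,
                         published, modified, source_urls):
    """Return a schema.org Article description as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        # Byline with a visible credential and institutional affiliation.
        "author": {
            "@type": "Person",
            "name": author_name,
            "jobTitle": job_title,
            "affiliation": {"@type": "Organization", "name": affiliation},
        },
        # Publication and update dates in ISO 8601 format.
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
        # Sources cited within the content.
        "citation": list(source_urls),
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    print(build_article_jsonld(
        headline="How to Read a Sunscreen Label",
        author_name="Dr. Sarah Chen",
        job_title="Board-Certified Dermatologist",
        affiliation="Example Clinic",
        published=date(2024, 1, 15),
        modified=date(2024, 6, 1),
        source_urls=["https://example.org/primary-study"],
    ))
```

The output would typically be placed in a `script` tag of type `application/ld+json` on the page. The markup should mirror what is visibly on the page: a byline in the markup that readers cannot see works against the transparency it is meant to signal.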

Chapter Takeaway

Trust determines everything in AEO. It's not one factor among many. It's the foundation the entire system rests on.

The hierarchy looks like this:

Layer 1: Trust signals. Without these, nothing else works. Author credentials, transparent About pages, source citations, freshness indicators, institutional credibility. This is the entry ticket.

Layer 2: Entity coverage and content quality. Everything from Chapter 6. Complete topic coverage, hub-and-spoke architecture, depth over breadth. This is what differentiates you once you're past the trust threshold.

Layer 3: Technical optimization. Schema markup, content structure, the answer-first framework. This makes your trusted, well-covered content maximally extractable.

Most organizations work these layers in reverse. They start with technical optimization, move to content creation, and treat trust as an afterthought. The organizations that get cited consistently do it the other way around: trust first, then coverage, then optimization.

The practical version of this chapter is a sequence:

Month 1 (quick wins): Add author bylines to all content. Create or improve your About page. List credentials. Add "last updated" dates. Start citing authoritative sources in your content.

Months 2-3 (build foundation): Publish an original case study with real data. Get a team member quoted in an industry publication. Complete LinkedIn profiles for all content authors. Ensure cross-platform consistency.

Months 4-6 (compound authority): Publish original research or a proprietary survey. Pursue speaking opportunities. Build relationships with journalists and editors. Seek guest contributions to recognized publications.

Trust isn't built overnight. But the trust threshold isn't as high as most people assume. The baseline signals (bylines, credentials, transparency, source citations) are achievable for any organization willing to prioritize them.

You now understand what answer engines look for: entity coverage, content structure, and above all, trust. Part III of this series shifts from understanding to execution: what to do first, how to measure progress, and how to scale an AEO strategy that compounds over time.

Frequently Asked Questions

How is trust in AEO different from domain authority in SEO?

Domain authority in SEO is primarily a function of backlink quantity and quality. Trust in AEO is broader. It includes backlinks, but also author credentials, institutional affiliation, content transparency, freshness, cross-platform consistency, and whether your content cites credible sources. A site can have high domain authority but low AEO trust if it lacks visible author expertise and editorial transparency. Think of domain authority as one ingredient in the trust recipe, not the whole thing.

Can a new website build enough trust to get cited by AI?

Yes, but it requires a deliberate strategy. The fastest path we've seen involves three moves: first, ensure all baseline trust signals are present from day one (author bios with credentials, transparent About page, source citations). Second, publish original research or a documented case study early, because primary source material gets evaluated differently than synthesized content. Third, build external validation through guest contributions to established publications or getting quoted in industry media. We've seen new sites earn their first AI citation within three months using this approach.

Do I need formal credentials (MD, JD, CPA) to be cited on YMYL topics?

For high-stakes YMYL topics like medical diagnosis, legal advice, or specific financial guidance, formal credentials are nearly essential. The AI is extremely cautious with these topics because the cost of citing bad information is high. However, for adjacent YMYL topics (general wellness, personal finance basics, business law overviews), demonstrated professional experience and institutional affiliation can substitute for formal credentials. The key is that your expertise needs to be visible and verifiable, not just claimed.

How often should I update content to maintain trust?

It depends on the topic. For fast-changing subjects (technology, regulations, market data), review quarterly. For slow-changing subjects (industry fundamentals, established practices), review every six months. For evergreen content (core concepts, historical topics), an annual review is usually sufficient. The important thing is making updates visible: show the "last updated" date and note what changed. Meaningful content updates outperform cosmetic date refreshes significantly.

Does citing sources in my own content really help with AI citations?

Yes, and more than most people expect. Citing credible external sources (peer-reviewed research, official data, recognized industry reports) signals rigor and places your content within a trusted information ecosystem. In our analysis, frequently cited sources averaged 8-12 external citations per piece, while rarely cited sources averaged 2-3. It's not just about helping the reader. It's about signaling to the AI that your content is well-researched and connected to authoritative knowledge.

Is it better to publish on a major platform (Forbes, Medium) or build authority on my own site?

Both, in sequence. Contributing to established platforms borrows institutional trust and creates external validation for your name and expertise. But the long-term play is building your own site into a trusted source. Use platform contributions to accelerate trust-building, then direct that credibility back to your own domain through consistent authorship, cross-linking, and original research published on your site. The institutional halo from major publications can help the AI start evaluating your own content more seriously.

What's the minimum trust threshold to get cited? Is there a checklist?

There's no universal score, but based on our analysis, these are the minimum signals present in the vast majority of cited sources: a named author with visible credentials or professional bio, a transparent About page, publication and update dates, at least some external source citations within the content, and at least one form of external validation (a quality backlink, a media mention, a guest contribution). If you're missing more than two of these, trust is likely your primary barrier to getting cited, regardless of how good your content is.
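That rule of thumb is easy to turn into a self-audit. The sketch below encodes the five baseline signals and the "missing more than two" cutoff from the answer above. The signal keys and function names are hypothetical labels, and the result is a prompt for where to look, not a score any AI system uses.

```python
# The five baseline signals listed in the answer above.
BASELINE_SIGNALS = (
    "named_author_with_credentials_or_bio",
    "transparent_about_page",
    "publication_and_update_dates",
    "external_source_citations",
    "external_validation",
)


def missing_signals(page_signals):
    """Return the baseline signals that are absent or marked False."""
    return [s for s in BASELINE_SIGNALS if not page_signals.get(s, False)]


def trust_is_likely_barrier(page_signals):
    """Apply the rule of thumb: more than two missing signals."""
    return len(missing_signals(page_signals)) > 2


if __name__ == "__main__":
    audit = {
        "named_author_with_credentials_or_bio": True,
        "transparent_about_page": False,
        "publication_and_update_dates": True,
        "external_source_citations": False,
        "external_validation": False,
    }
    print(missing_signals(audit))
    print(trust_is_likely_barrier(audit))
```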

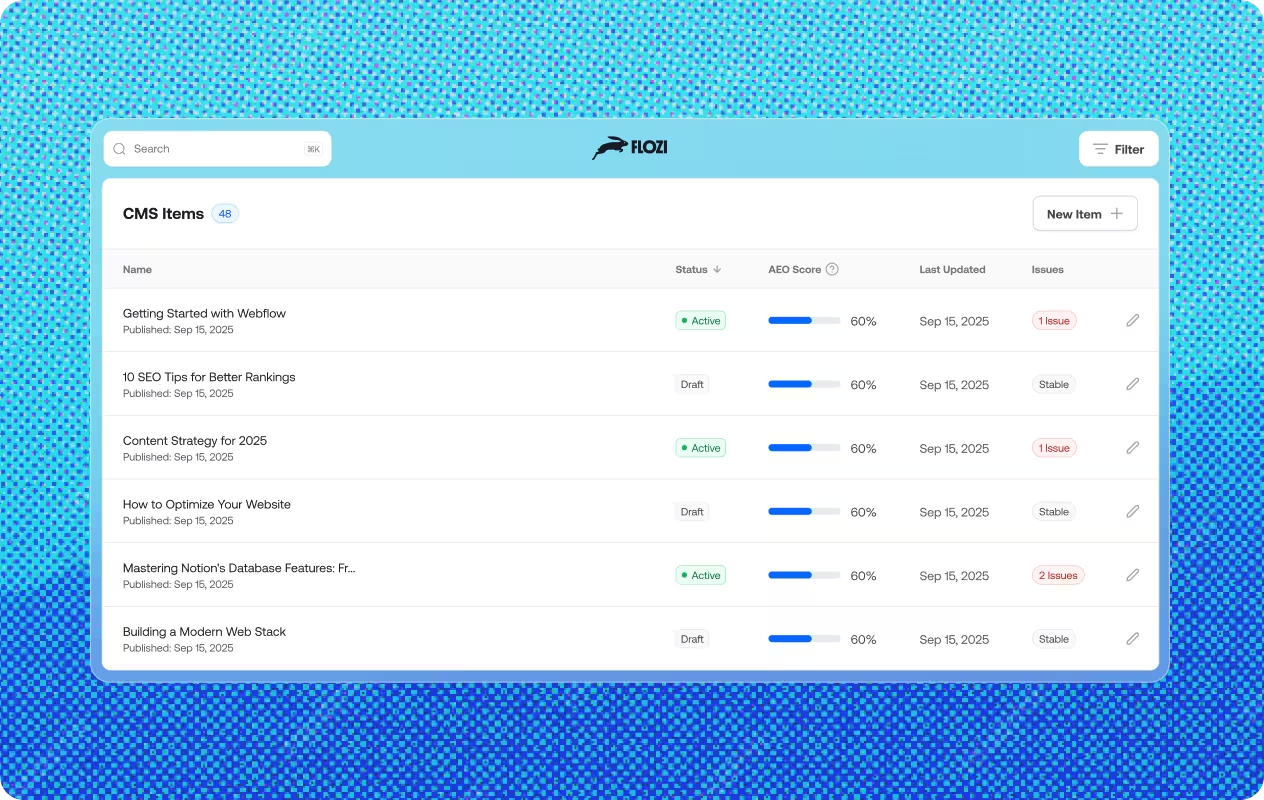

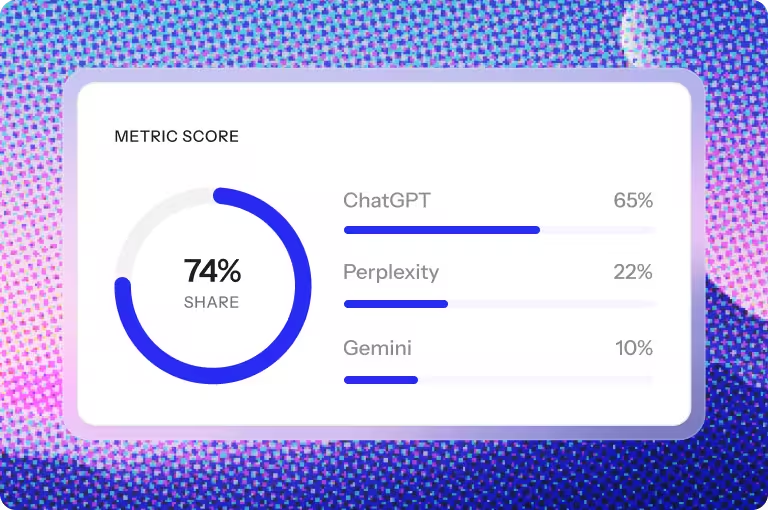
